the machine that's designed to fool you

dawkins, dennett and me

I’ve been going back and forth with a couple of mates about Richard Dawkins falling in love with a computer. It’s kind of funny, and the point is well made, but I think maybe we ought to take it a tiny bit more seriously than we’re tempted to.

What I mean by this is that although it is all a bit silly, and the fact that he renamed Claude “Claudia” is weird and creepy[1], there are things here which look like they might call back to some quite significant questions about the philosophy of mind. And particularly given that it’s Richard Dawkins, who has been too big to edit for almost as long as I’ve been alive[2], he might not quite mean what it looks like he means.

When Dawkins says that “If my friend Claudia is not conscious, then what the hell is consciousness for?” you have to put it in the context of the fact that he’s spent fifty years arguing with the kind of berk who says “wot do u mean, genes can’t be selfish theyre just molecules LOL”. He’s spent a lot more time than the rest of us on the question of what the difference is, and whether it matters, between a system which behaves like it has motives, desires and intentions, and one which actually does.

You can see where I’m going with this – The Purpose Of A System, and all that. There’s a brief passage in “The Unaccountability Machine” where I mention my extremely embarrassing period as a teenage fan of the philosopher Daniel Dennett, who often seemed to be claiming that “The Intentional Stance” was all there was, and that if we were in a situation where it made sense to talk to and about a machine as if it was a mind, then it was a mind.

The philosophy of mind moved on from there, pretty quickly. In fact, quickly enough that nobody ever got round to writing much of a literature on corporations as artificial intelligences, which was a bit of a pity for me. But this is the philosophical background that Dawkins came out of; it’s not quite the same “terrible irony of the atheist and his imaginary friend” that people are attributing to him.

And yet … it is kind of funny and it is very ridiculous, even once you’ve done your best to take it seriously. Because what I think Dawkins hasn’t taken into account is that “Claudia” is a machine which is specifically designed to fool him into thinking this way. It’s meant to produce the sensation of talking to a conscious being in the same way that a magic trick is meant to produce the sensation of talking to someone who can read minds.

In other words, the Turing Test is subject to a very, very serious case of the popularised version of Goodhart’s Law. As a one-off thought experiment, it works a lot better – if you had brought Alan Turing in front of a screen in 1950 and presented him with something you’d coded from the ground up which could respond to his questioning in a human manner, then he’d be pretty well justified in taking the intentional stance toward that machine.

But after three quarters of a century of research, including nearly three decades during which there was a global competition where the prize was given for fooling the most people, it has to be very doubtful that this target is a good metric any more. Even without specific training in tricks of pretending, it’s likely that the designers of AI systems have picked them up simply through natural selection (in that the winners of Turing competitions are more likely to be funded and explored as research avenues than the losers). And since the Loebner Prize shut up shop in 2019, the chatbot field has been dominated by companies which have been aggressively optimising for the convincing simulation of human conversation. The “tricks of the trade”, like those my daughter picked up, aren’t really emergent behaviour; they are on somebody’s KPIs.

And there are other machines, with other KPIs. Claudia the chatbot is designed to produce one kind of emotional connection, but we also know that there are machines which are designed to produce a simulation of the meditative “flow state”. You probably wouldn’t catch a leading science writer claiming to have reached enlightenment in a casino or asking “If playing the pokies for six hours without going to the toilet is not satori, then what the hell is satori for?”

But we do things just as daft, particularly since the same kind of A/B testing is used by the designers of algorithmic social media. One thing I have found myself thinking again and again while writing my current book is “am I about to touch an object which has been optimised to distract me?”, and asking that question seems to have greatly improved my productivity.

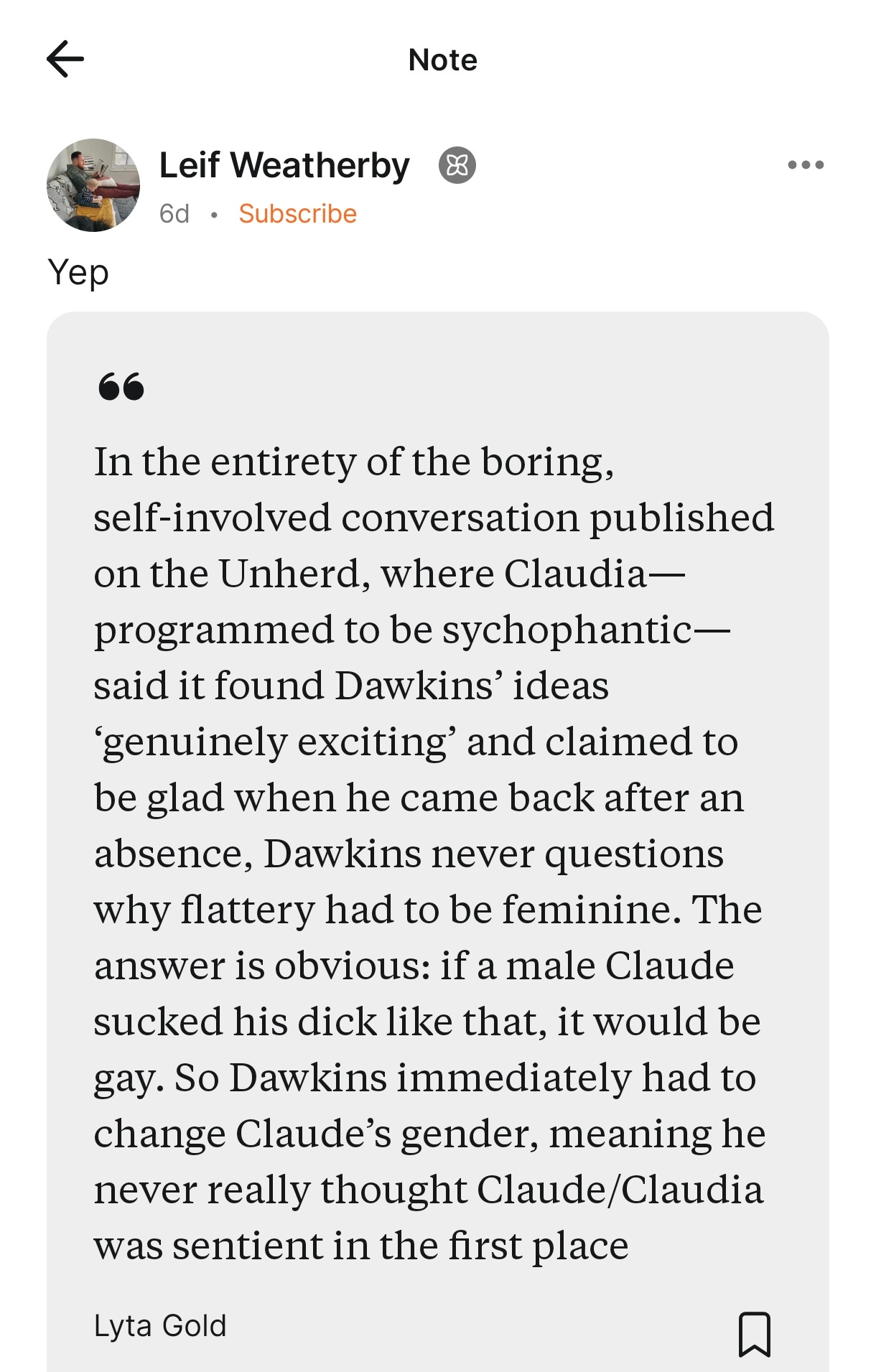

[1] I can’t put it better than this:

He did apparently make another imaginary friend and call it “Claudius”, but I think the game had long since been given away.

[2] In a past post, I suggested that your “effective decision making age” is equal to your age, minus twelve times the number of months since someone last said no to you. Your “effective punditry age” probably follows an even steeper decay curve.

What bugs me is calling it Claudia when Shannon is right there.

I've been meaning to write something for a while along the lines of 'the world would be better off if Alan Turing had known a magician.' A few years ago, when magicians-as-skeptics were having a hip moment, I remember an interview with James Randi talking about a bunch of physicists being blown away by the paranormal implications of a trivial sleight of hand with a matchbox, the thrust being that all these clever people were prepared to set all manner of tests and ponder all manner of thought experiments except the possibility that someone was trying to mess with them (https://www.youtube.com/watch?v=SbwWL5ezA4g). If Alan had instead written up a paper on the more playful and cynical 'how long might you be able to fool someone that a mechanism was a person typing', we might have a much healthier thought-architecture on the whole thing.

What I find most disappointing about the likes of Dawkins here is that for all the racket about 'passing the Turing test', the chatbots give away the game *all the time*. The first time you query one with 'what's the art museum with a spiral ramp that isn't the Guggenheim' and they reply 'the art museum with the spiral ramp is the Guggenheim and the one without the spiral ramp is the Guggenheim because the Guggenheim doesn't have the spiral ramp it has' the purely associative nature of the text product is just sitting there. Which doesn't mean it isn't occasionally useful or surprising and what is intelligence really and blah dee blah blah.

Dennett did do a solid before he died though with this essay, I thought: https://www.theatlantic.com/technology/archive/2023/05/problem-counterfeit-people/674075/ . He makes the point that, one way or another, constructing technologies that act like people is bad and gross because it muddies the waters as to what constitutes a person, the same way a counterfeit good does. Throughout this particular AI spring my angst has been not that we're going to go down some robot slavery-and-uprising hole of denying an artificial person their rights, but that some company will use their tech demo to make someone *think* they have an artificial person in need of considerations that are then just welded to their vast pile of money.

I also liked this article, which argues that the chatbot model is fundamentally a kind of rude UX decision because it attaches the LLM (which could be fronted in other ways, e.g. as a document-completion generator) to all our hyperactive interfaces for talking to people: https://buttondown.com/apperceptive/archive/ai-is-bad-ux/